What if your AI agent could manage other AI agents while you watched every conversation in real time from your phone, stepped in at any moment, and never exposed a single API key? That's HiClaw.

I set up HiClaw with local models to show you how multi-agent orchestration actually works. It's an open-source project built on top of OpenClaw that solves a concrete problem: complex tasks overwhelm single agents.

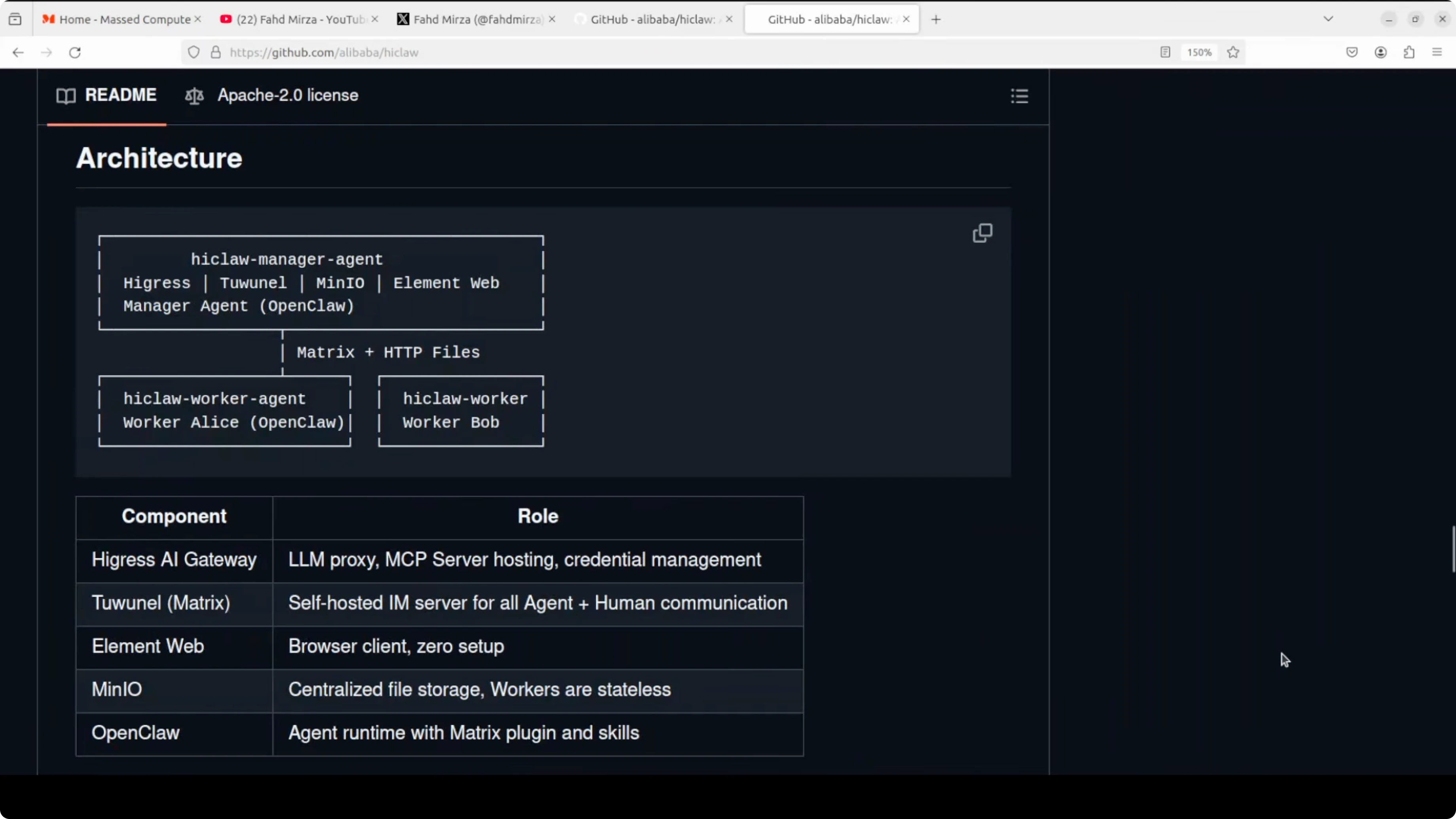

HiClaw introduces a manager-worker architecture where a manager agent coordinates multiple worker agents through Matrix chat rooms. You observe everything in real time and can jump in wherever needed.

What HiClaw Is and What Problem It Solves

HiClaw is a multi-agent operating system built on OpenClaw. The problem it solves is straightforward: single agents hit walls on complex tasks.

The solution? A manager that coordinates specialized workers. Each worker handles part of the project, while the manager assigns tasks and monitors progress.

The security model is solid: workers never see your real API keys. They only hold consumer tokens, and your actual credentials never leave the Higress gateway.
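To make the token split concrete, here is a minimal sketch of the idea. It is not how Higress is implemented: `REAL_API_KEY`, `CONSUMER_TOKENS`, and `upstream_headers` are hypothetical names chosen for illustration. The point is only that the gateway validates a worker's consumer token and attaches the real key on its own side.

```python
# Illustrative sketch only: names and values are hypothetical, not Higress internals.
REAL_API_KEY = "sk-real-key-kept-on-the-gateway"

# Consumer tokens handed to workers; each maps to the worker it was issued to.
CONSUMER_TOKENS = {
    "consumer-token-alice": "alice",
    "consumer-token-bob": "bob",
}


def upstream_headers(consumer_token: str) -> dict[str, str]:
    """Validate a worker's consumer token and build headers for the model provider."""
    worker = CONSUMER_TOKENS.get(consumer_token)
    if worker is None:
        raise PermissionError("unknown consumer token")
    # The real key is attached here, on the gateway side; the worker never receives it.
    return {"Authorization": f"Bearer {REAL_API_KEY}", "X-Worker": worker}


if __name__ == "__main__":
    print(upstream_headers("consumer-token-alice")["X-Worker"])
```

In a design like this, revoking a worker only means deleting its consumer token. The real key stays unchanged and never has to be rotated because of a compromised worker.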

One command installs everything: AI gateway, Matrix server, file storage, web client, and the manager agent. Your entire AI team runs on your own machine.

Requirements for Installing HiClaw

For this guide I used an Ubuntu system with an NVIDIA RTX 6000 GPU with 48 GB of VRAM. The model I'm running is GLM 4.7 Flash on Ollama.

Minimum requirements:

- Docker installed and running

- Ollama running (if using local models)

- NVIDIA drivers and CUDA (if using GPU)

Verify Docker:

docker --version

Check GPU (if available):

nvidia-smi

List available Ollama models:

ollama list

The default OpenAI-compatible endpoint for Ollama is:

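http://localhost:11434

That is the server address. Ollama serves its OpenAI-compatible API under the /v1 path, so a client that expects the full API root needs http://localhost:11434/v1.

If you haven't set up Ollama yet, read the complete guide on how to configure OpenClaw with Ollama.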
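If you would rather check the endpoint from a script than from the CLI, the sketch below queries Ollama's native `/api/tags` endpoint, which returns the same models that `ollama list` prints. The function names and the 5-second timeout are my own choices, and the base URL assumes the default local address.

```python
import json
import urllib.request

OLLAMA_BASE_URL = "http://localhost:11434"  # default local address; adjust if yours differs


def model_names(tags_payload: dict) -> list[str]:
    """Extract model names from the JSON returned by Ollama's /api/tags endpoint."""
    return [m["name"] for m in tags_payload.get("models", [])]


def fetch_model_names(base_url: str = OLLAMA_BASE_URL, timeout: float = 5.0) -> list[str]:
    """Query a running Ollama server and return the names of its local models."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return model_names(json.load(resp))


if __name__ == "__main__":
    try:
        print(fetch_model_names())
    except OSError as exc:  # connection refused, timeout, DNS failure
        print(f"Ollama is not reachable at {OLLAMA_BASE_URL}: {exc}")
```

An empty list means the server is up but no model has been pulled yet. An error means Ollama is not listening where you expect.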

Installing HiClaw Step by Step

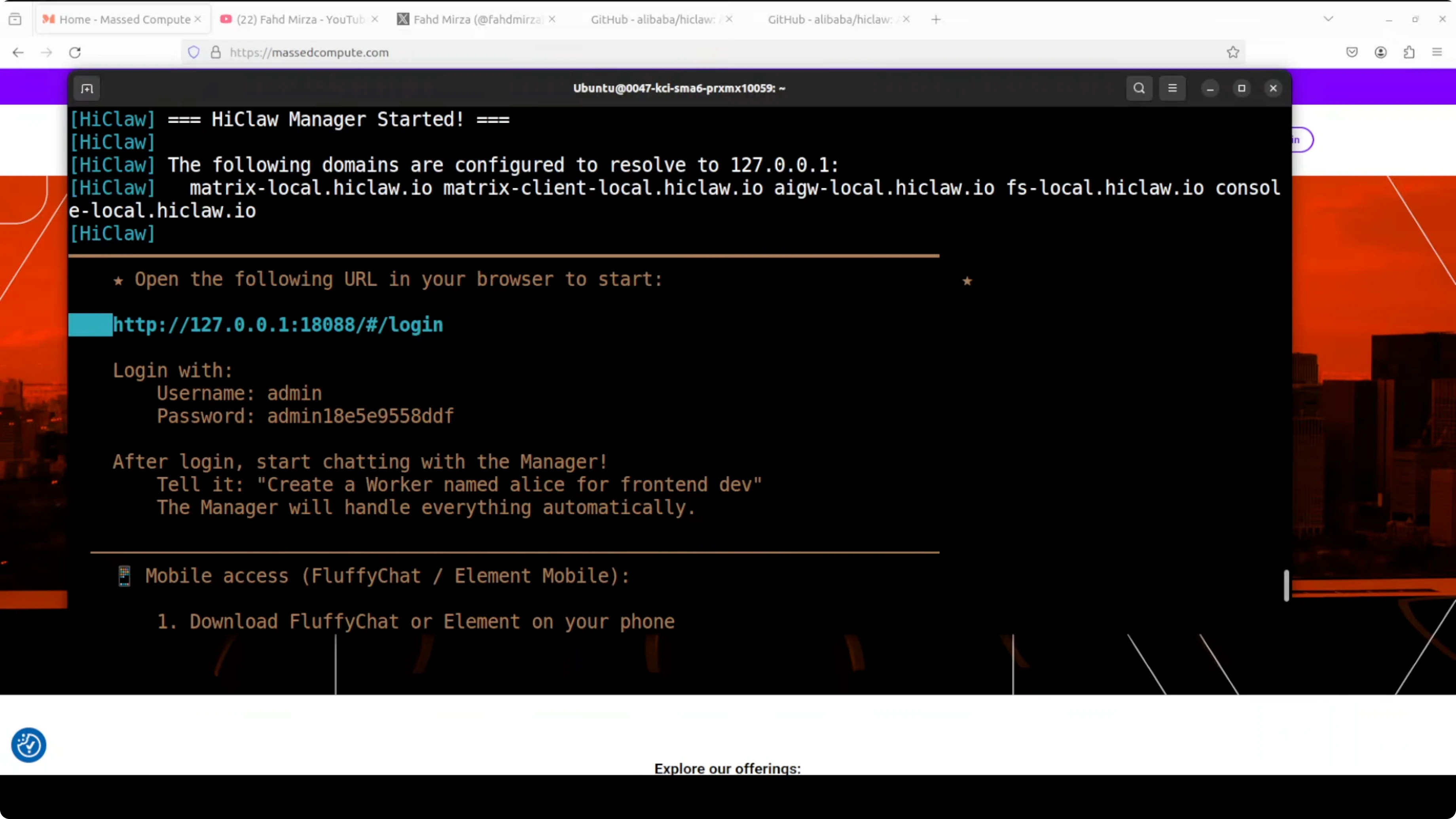

I run the installer script that installs HiClaw and all its components. The wizard asks for a few inputs and I choose the manual route.

Select English as the language. Choose Manual setup instead of Quick Start, and skip their paid coding plan. Pick the OpenAI-compatible API option.

When asked for the base URL, I enter my Ollama OpenAI-compatible endpoint. If you're running it on another port or URL, adjust accordingly.

Enter your Ollama model ID. Since Ollama doesn't require an API key locally, I put any random value in the key field.
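To see why a random key is fine, here is a rough sketch of the kind of request an OpenAI-compatible client sends. This is not HiClaw's own code: `build_chat_request` is a hypothetical helper, `your-model-id` is a stand-in for whatever `ollama list` shows on your machine, and the `/v1` base URL is Ollama's OpenAI-compatible root.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "placeholder") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Local Ollama ignores the key, but OpenAI-style clients still send one.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "your-model-id" is a placeholder; replace it with a model you have pulled.
    req = build_chat_request("http://localhost:11434/v1", "your-model-id", "Say hello")
    try:
        with urllib.request.urlopen(req, timeout=120) as resp:
            reply = json.load(resp)
        print(reply["choices"][0]["message"]["content"])
    except OSError as exc:  # server down, unknown model, timeout
        print(f"Request failed: {exc}")
```

The key only matters once you point the same client at a hosted provider, where the `Authorization` header is actually checked.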

Set a username and password. I go with admin and let it autogenerate the password.

Keep the deployment local for your own use so you don't expose it to your network. For Docker containers and the Higress console, accept the default ports (you can change them if needed).

You'll see a local domain entry and an ECS deployment option. In simple terms, those are for custom domains across services, but for local runs I accept the defaults.

Skip the GitHub token. Accept the default Docker volume locations.

For integration, there are two options: OpenClaw and Copa. I go with OpenClaw because that's what gives the full experience at the moment.

Skip E2E for now. Keep default values and home directory path, then let the installer pull images and start containers.

The script pulls a lot of Docker images and takes some time. After downloads finish, the manager container starts and the login page appears with autogenerated credentials.

First Login and Interface

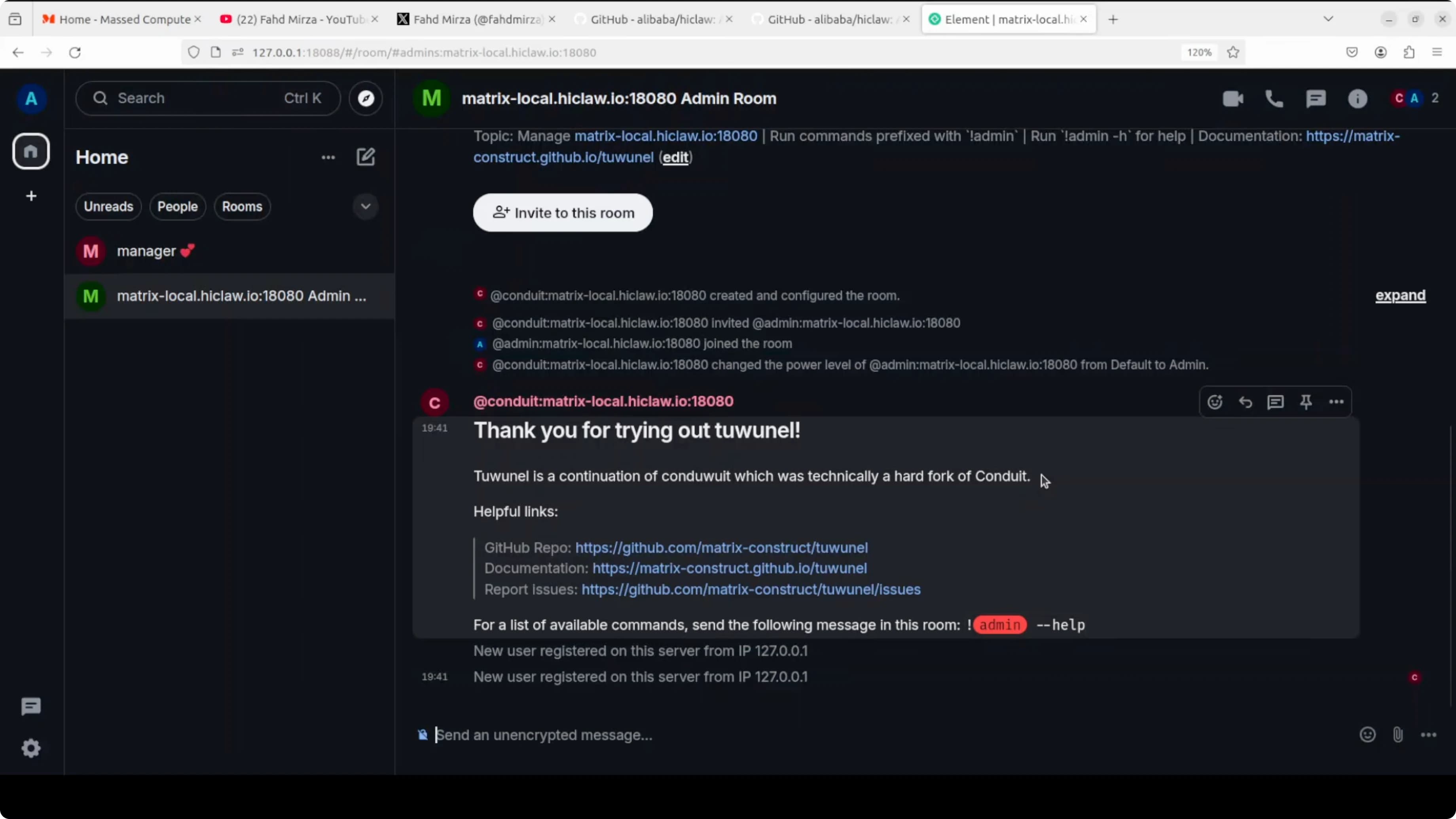

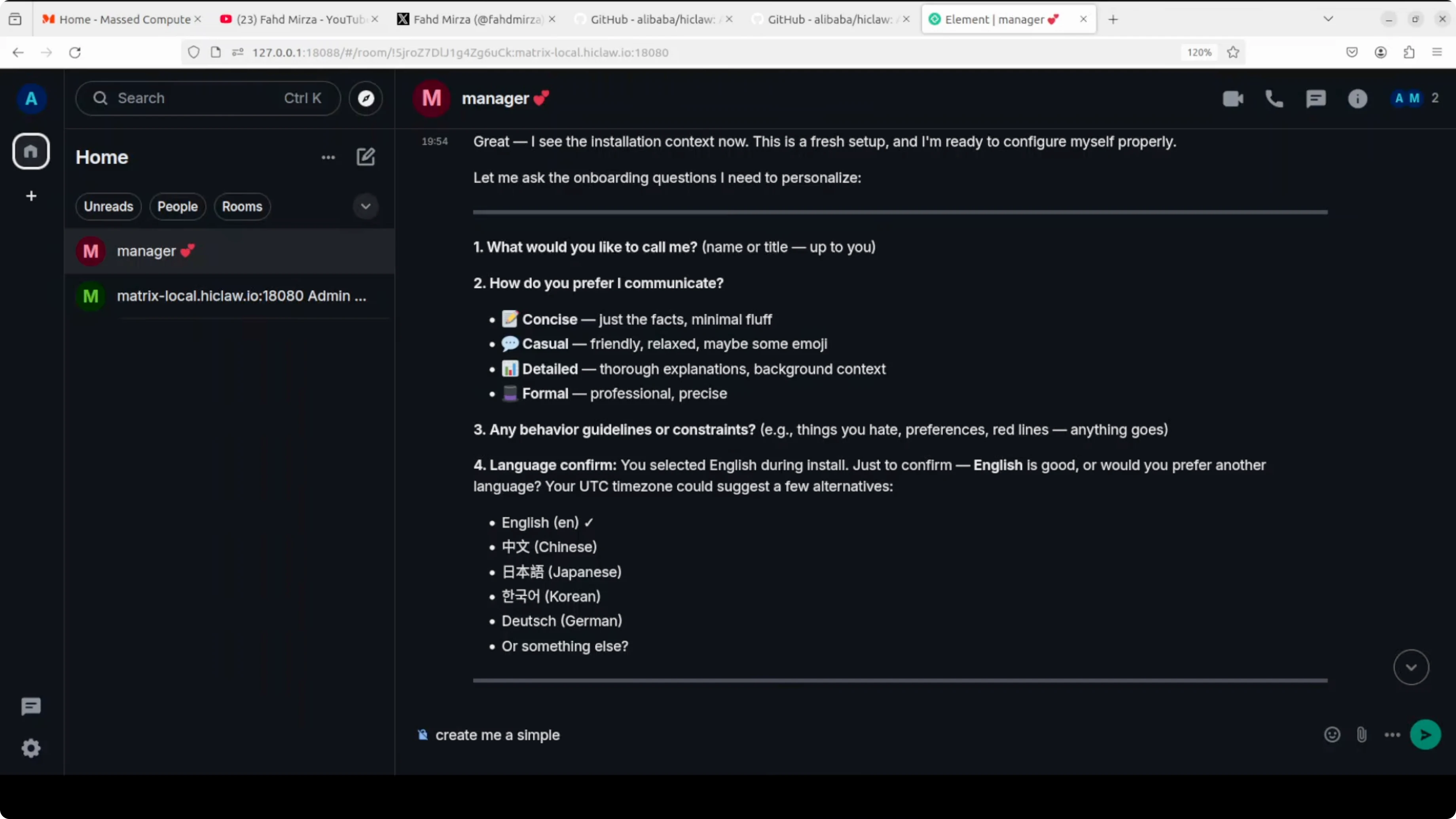

Open the login page in your browser, enter the credentials, and sign in. The welcome screen shows a Manager section where you can chat with the manager agent. It's already connected to OpenClaw.

You can deploy it on your LAN. You can also connect it with Copa, which is another agent framework. From here the experience matches what you see in OpenClaw and similar projects.

You can create multiple rooms and assign different agents to different rooms. For that, I wouldn't recommend base local models in production. Use hosted API models from OpenAI or Alibaba Model Studio if you need speed and reliability at scale.

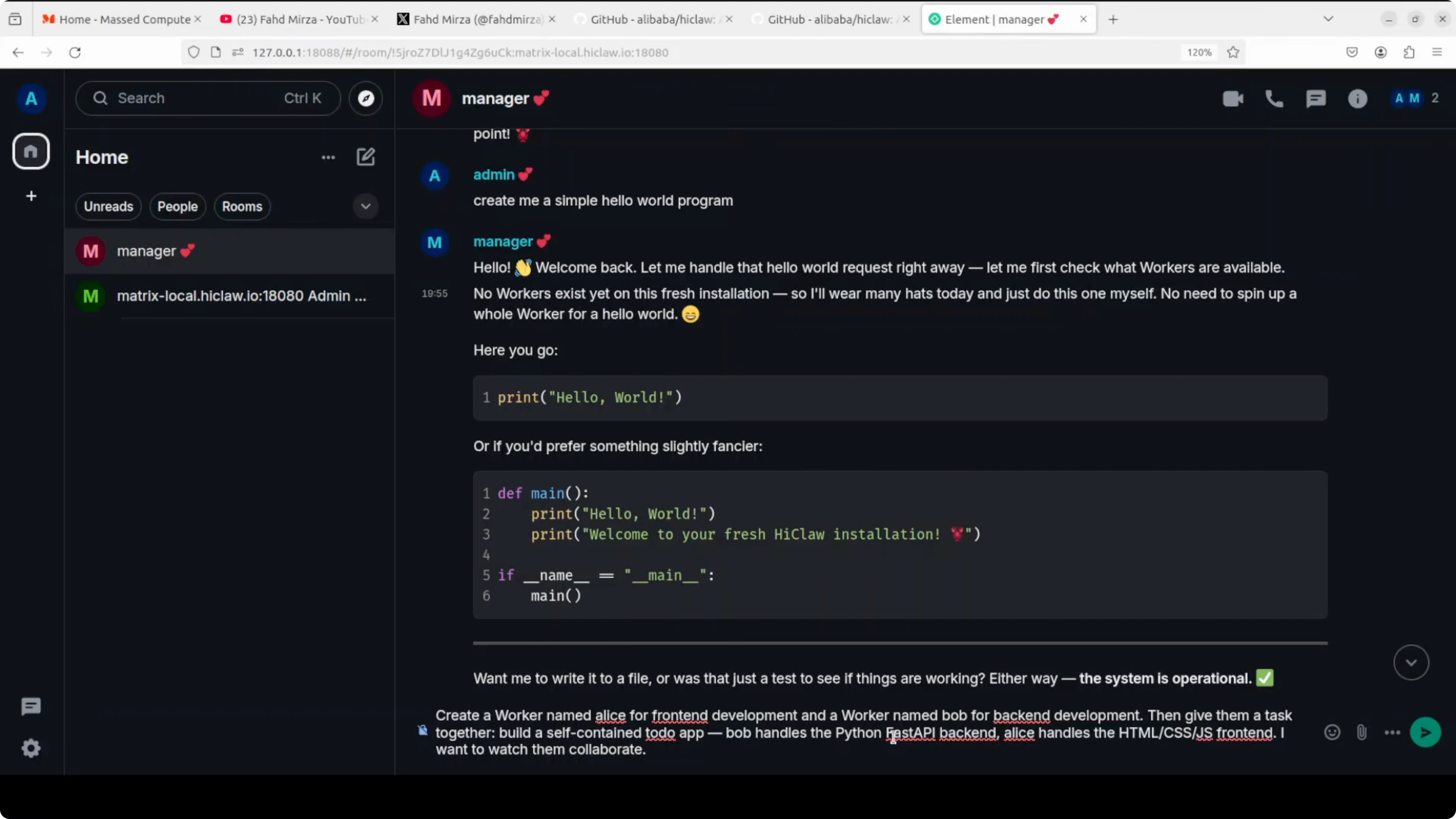

Quick Test: Hello World

I ask the manager to create a simple Hello World program to show how it works. It replies with code and documentation. You can see it's using OpenClaw behind the scenes.

The response comes quickly and the manager generates everything autonomously. It's a good test to verify the OpenClaw integration is working.

Multi-Agent Orchestration with the Manager

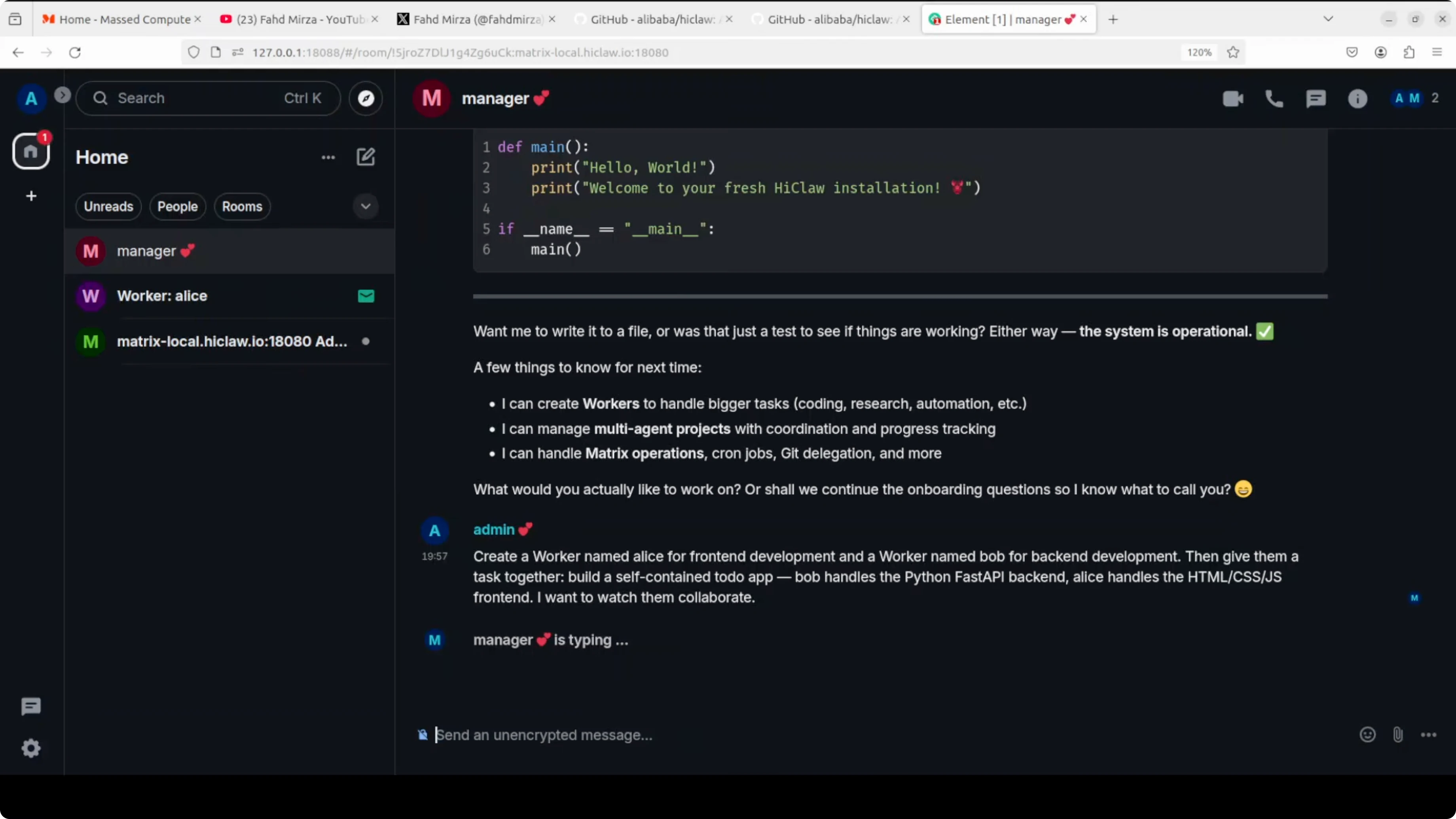

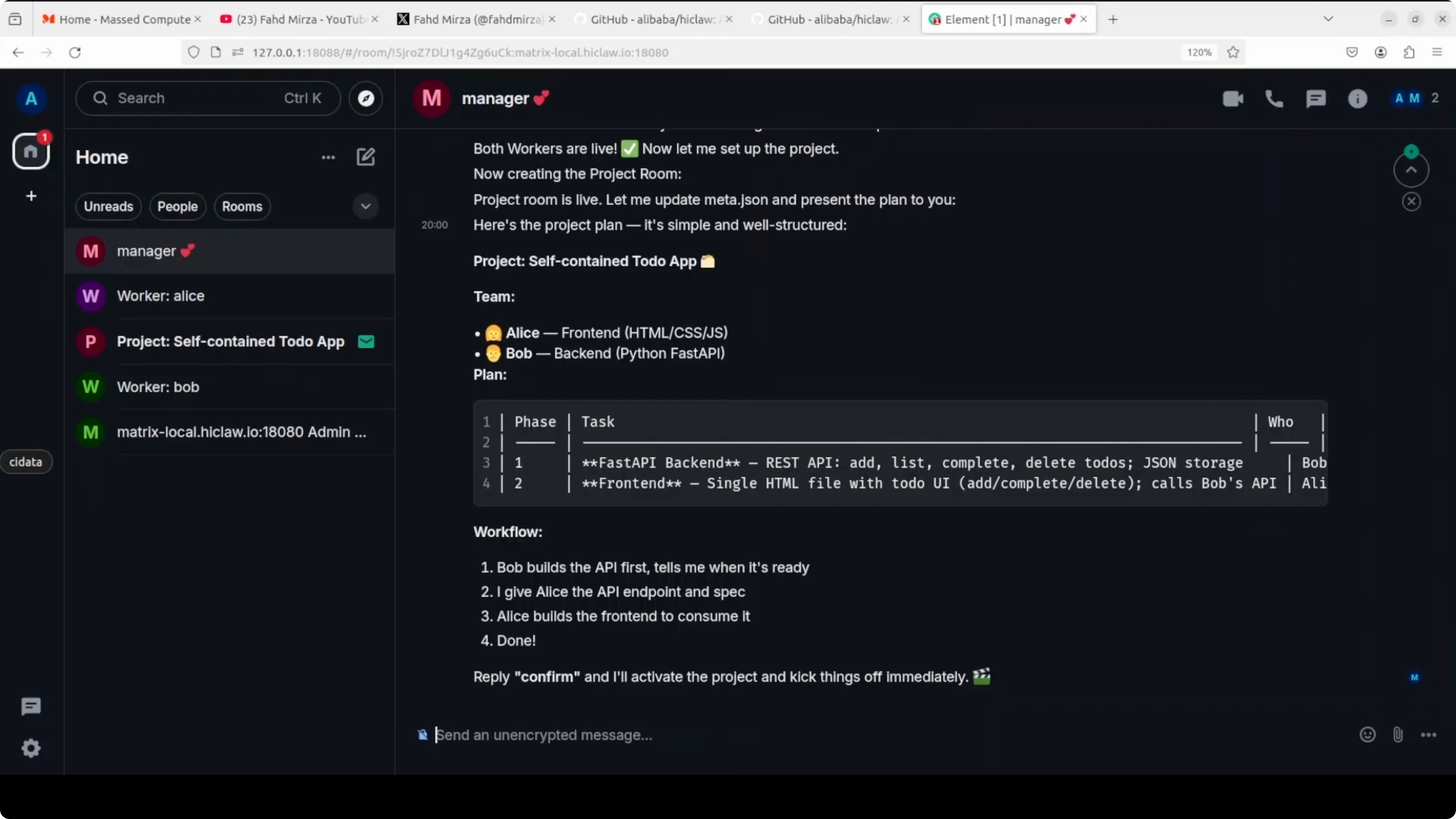

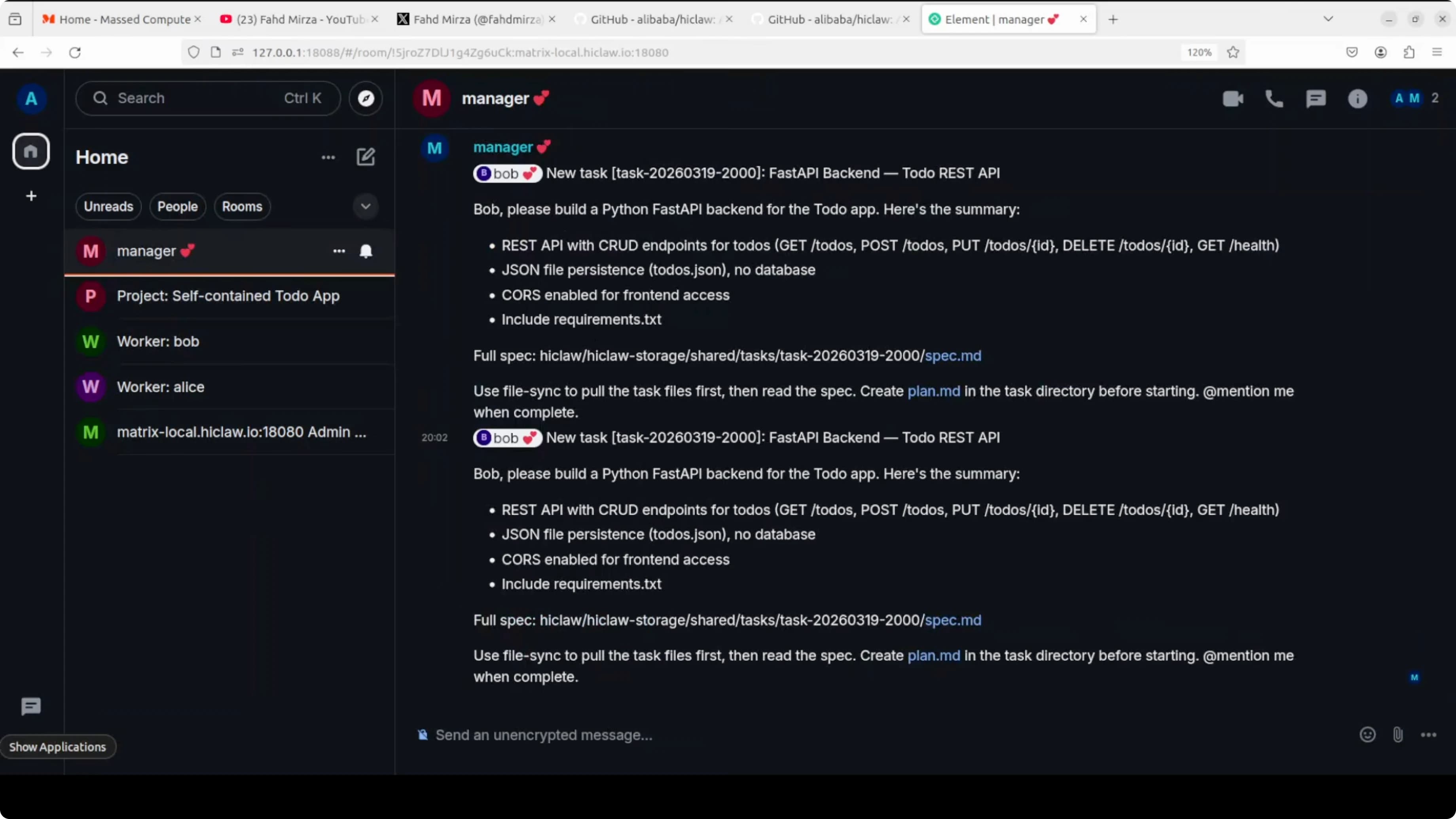

The real beauty of HiClaw is multi-agent orchestration and coordination. I ask it to create a worker named Alice for front-end development and a worker named Bob for back-end development. I assign them a shared task: build a self-contained to-do app.

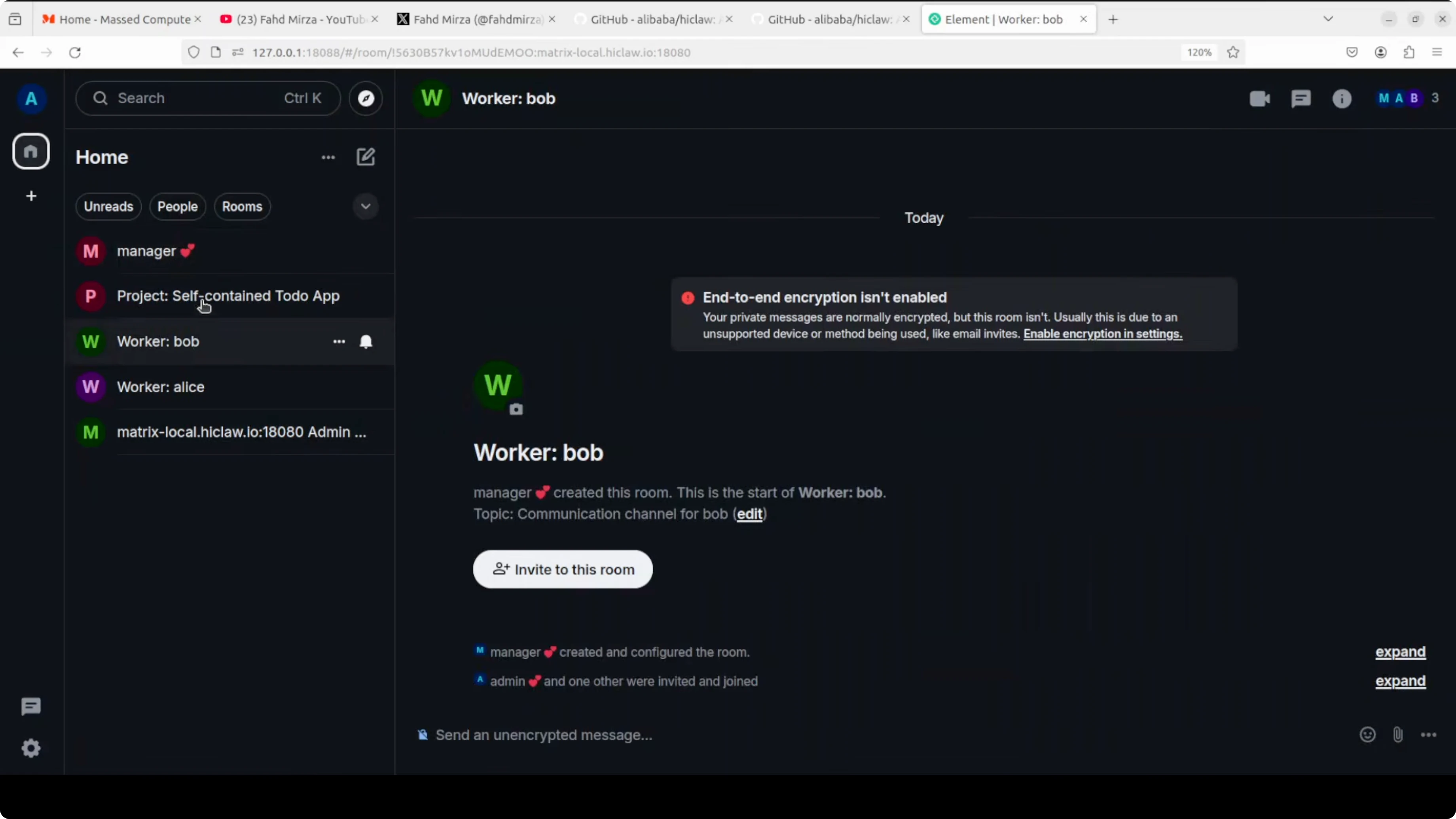

Bob handles a Python FastAPI back-end and Alice handles the front-end. I want them to collaborate. On the left side of the UI, the workers appear, and I join their rooms.

The manager explains that it can create workers and manage multi-agent projects. For production and deep work I suggest API-based models for performance. It then creates the project tasks and workflows and asks me to confirm.

I confirm, and the project spins up. The manager assigns Bob the back-end tasks and tags him in the project room. The same thing happens for Alice with the front-end tasks, and updates start pouring in across rooms.

There's a manager room, a Bob room, and a project room where agents talk to each other. Complex projects take longer, and if you're using API models, watch your cost and tokens.
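The room layout is easier to picture as data. The sketch below is a toy in-memory model, not the Matrix API or HiClaw's code: `Room` and `Manager` are hypothetical classes that only mimic the pattern of a manager tagging a worker in a shared project room where a human can also post.

```python
from dataclasses import dataclass, field


@dataclass
class Room:
    """A chat room: a name plus an ordered message log."""
    name: str
    messages: list[str] = field(default_factory=list)

    def post(self, sender: str, text: str) -> None:
        self.messages.append(f"{sender}: {text}")


@dataclass
class Manager:
    """Hypothetical manager that hands out tasks by mentioning workers in a shared room."""
    project_room: Room
    assignments: dict[str, list[str]] = field(default_factory=dict)

    def assign(self, worker: str, task: str) -> None:
        self.assignments.setdefault(worker, []).append(task)
        # Tagging the worker in the shared room keeps the hand-off visible to everyone.
        self.project_room.post("manager", f"@{worker} please take: {task}")


if __name__ == "__main__":
    project = Room("todo-app-project")
    manager = Manager(project)
    manager.assign("bob", "FastAPI back-end for the to-do app")
    manager.assign("alice", "front-end for the to-do app")
    project.post("human", "@bob keep the API self-contained")  # a human stepping in
    print("\n".join(project.messages))
```

Because every hand-off is a message in a room, the log doubles as an audit trail, which is what makes the real-time observation described here possible.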

The speed is a bit slow on my end, but the coordination features are strong on top of OpenClaw.

If your agents need to control the browser for web workflows, check out the hands-on example on browser automation with OpenClaw.

Models: Local vs Hosted

Local Models with Ollama

Local models are great for privacy and full control. They work well for prototyping, internal tools, and controlled experiments where you want to keep data local.

Pros: no external API keys, full data control, predictable cost.

Cons: slower responses, higher VRAM requirements, weaker reasoning on complex tasks compared to top hosted models.

Hosted API Models

Hosted models from providers like OpenAI and Alibaba Model Studio work well for production multi-agent projects. They shine on complex planning, tool use, and team coordination.

Pros: faster responses, better reliability, superior output quality.

Cons: ongoing cost, token limits, you must handle API key security.

Configuration Snippets

If your integration asks for an OpenAI-compatible base URL for Ollama, this is the typical local value:

# Base URL in the installer prompt

http://localhost:11434

If you want to force Ollama to listen on a specific interface and port before running the installer:

# Optional - bind Ollama to localhost:11434

export OLLAMA_HOST=127.0.0.1:11434

ollama serve

To confirm your model ID for the installer:

# Find your exact model identifier

ollama list

# Pull a model if needed

ollama pull <your_ollama_model_id>

Tips and Practical Notes

Workers only hold consumer tokens. Your real credentials stay behind the Higress gateway, which is the right security posture for multi-agent setups.

You can monitor every conversation in real time and step in when needed. Projects with more rooms and long task threads will take more time to converge.

Watch the costs: if you use hosted API models for complex projects, tokens add up quickly. Always monitor usage.
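A back-of-the-envelope estimate helps before starting a long project. The sketch below is simple arithmetic, and every number in the example call is a made-up placeholder, not a real provider price or a measured HiClaw token count. Substitute your provider's current rates and your own observed usage.

```python
def estimate_cost_usd(agents: int, turns_per_agent: int,
                      input_tokens_per_turn: int, output_tokens_per_turn: int,
                      usd_per_million_input: float, usd_per_million_output: float) -> float:
    """Rough project cost: every agent turn pays for its input and output tokens."""
    turns = agents * turns_per_agent
    input_cost = turns * input_tokens_per_turn * usd_per_million_input / 1_000_000
    output_cost = turns * output_tokens_per_turn * usd_per_million_output / 1_000_000
    return input_cost + output_cost


if __name__ == "__main__":
    # All numbers below are made-up placeholders; substitute your provider's real prices.
    cost = estimate_cost_usd(agents=3, turns_per_agent=40,
                             input_tokens_per_turn=6_000, output_tokens_per_turn=800,
                             usd_per_million_input=1.0, usd_per_million_output=4.0)
    print(f"Estimated cost: ${cost:.2f}")
```

Treat the result as a lower bound: in chat-style workflows the input side tends to grow with every turn as conversation history accumulates, so long threads cost more per turn than short ones.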

Real-time visibility: one of the best features is seeing how agents communicate with each other. It gives you transparency you normally don't have with single-agent systems.

Final Thoughts

HiClaw brings a manager-worker pattern to multi-agent projects and makes the full workflow visible and interactive. The one-step installer sets up the gateway, Matrix chat, storage, web client, and manager so your team runs fully locally.

Use local Ollama models for demos and private tests, and switch to hosted APIs when you need speed and reliability for production multi-agent coordination.

When to use HiClaw:

- Complex tasks requiring specialized skills

- Projects where you want to observe and guide coordination between agents

- Setups where credential security is critical

- Environments where data privacy is a priority

When to avoid it:

- Simple tasks a single agent can handle

- Situations where speed matters more than coordination

- Limited budget (watch API costs on long tasks)

If you want to dive deeper into OpenClaw and build your first agent, read the guide on building your first OpenClaw skill.